Hi Everyone,

I'm sure you've seen this story.

Prospect: How do I convince my boss (CIO, etc.) that we are buying the right edition of Acumatica Cloud ERP?

Sales guy: Errr, mmmm, well... you should trust me...

Prospect: OK, just explain why we need 4 CPUs, not 2. We only have 3 companies, so we can get 2 CPUs, right? Why are you offering us a 4 CPU license????

Sales guy: Errr, mmmm, well... where the hell is the tech guy...?

So, here is a helping hand to our sales team.

What I did: I took one of our big customers and analyzed the database transactions, users, and payload.

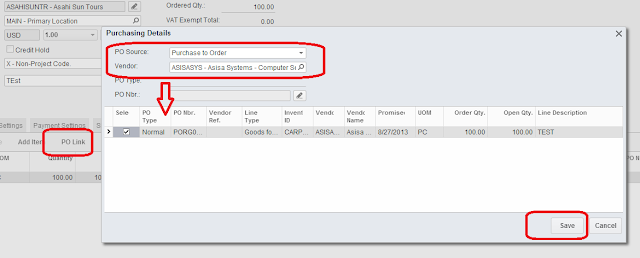

It is a Departmental Edition with 2 CPUs for the web server, 2 CPUs for the SQL server, and 4 GB of shared RAM, running on a VERY FAST Hyper-V virtual machine (shared with SQL) with SSD drives. So we can consider these CPUs ideal for calculating capacity limits. :)

If you are not interested in the technical part, just scroll down to the big-font area, and later copy and paste the pictures into your PPTs when needed, especially the skyscrapers one.

The rest of the team can stay ;)

First of all, what does the web server CPU do in Acumatica? It processes business logic.

If that doesn't ring a bell: the CPU processes what you have entered on the screen, validates that input, calculates formulas, and finally sends data back to your browser or to the SQL database.

An analogy from back in the '60s: the CPU is the engine in your electric typewriter. If it runs slowly, then even when you typeveryfast it willnotprocess the keystrokes right away; it will either queue them and type them after you finish pressing the keys (a good typewriter) or simply jam the letters :) (a lousy one). Well, of course Acumatica is a good one; it never jams! :)

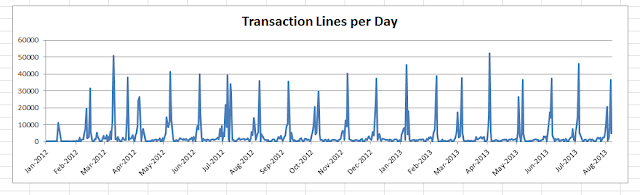

Back to the test: here is the daily transaction load for the past two years.

I can see clear peaks on some days of up to 50,000 transaction lines per day, while on other days it can be less than 100. So our CPU must be able to withstand these peaks. But 50,000 per day could be spread evenly over 8 hours or arrive in just 1 minute :). So these aggregated data look nice but can't tell us what the CPU actually does or how powerful it should be.
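To see why daily totals hide the real load, here is a minimal Python sketch (the helper name `lines_per_minute` is just for illustration, and it assumes the lines arrive evenly within the chosen window) comparing the same 50,000 lines spread over an 8-hour day versus arriving in a single minute:

```python
# Same daily total, very different load on the CPU depending on how it is spread.
DAILY_LINES = 50_000  # peak daily volume from the chart above

def lines_per_minute(total_lines: int, window_minutes: float) -> float:
    """Average rate, assuming total_lines arrive evenly within window_minutes."""
    return total_lines / window_minutes

spread_over_workday = lines_per_minute(DAILY_LINES, 8 * 60)  # ~104 lines/min
all_in_one_minute = lines_per_minute(DAILY_LINES, 1)          # 50,000 lines/min

print(f"8-hour spread: {spread_over_workday:.0f} lines/min")
print(f"1-minute burst: {all_in_one_minute:.0f} lines/min")
```

Same daily number, a 480x difference in the rate the CPU has to sustain.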

Let's take a closer look at transactions per minute to see the actual peaks.

Now the data look more realistic: on the day highlighted in red, we had some peaks when users entered 6,000 to 8,000 transaction lines per minute. Let's take a closer look at that specific day.

And now let's put the first peak under the microscope.

Here we can see that Acumatica processes data at a rate of about 5,000 records per minute, working through 5,000 + 5,000 + 4,000 + 1,000 queued records within about 3 minutes. So the actual processing rate is around 15,000 transaction lines per 3 minutes, or about 5,000 transaction lines per minute, when data are supplied in large chunks.
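The arithmetic behind that estimate, as a small Python sketch. The per-minute values are read off the chart, and, following the text, the burst is treated as a window of roughly 3 minutes:

```python
# Lines processed in each minute of the first peak, as read from the chart.
queue_per_minute = [5_000, 5_000, 4_000, 1_000]

total = sum(queue_per_minute)  # 15,000 lines in the burst
window_minutes = 3             # burst treated as ~3 minutes, as in the text
rate = total / window_minutes  # ~5,000 lines per minute

print(total, rate)
```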

At the same time, we can see that at 9:48 PM the system processed 8,000 records at peak. At that time, either the amount of data was smaller or the CPU was less loaded with other, non-transactional tasks.

Anyway, for us it is a very good indication: Acumatica can process 5,000 to 8,000 transaction lines (rows) per minute. With 2 CPUs, that is 2,500 to 4,000 transaction lines per CPU per minute. Breaking it down to per second, we get gold:

One CPU can process 40 to 65 transaction lines per second at most.

Of course I assume this is a dedicated CPU core.

It can also be virtual, but the server will EAT IT UP COMPLETELY, om-nom, at such peak loads. So it will become Virtual ate Real. :)
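Converting the observed range to per-CPU, per-second figures, sketched in Python under the same assumption as the text (the load splits evenly across the 2 web-server CPUs, each a dedicated core):

```python
CPUS = 2                              # Departmental test server: 2 web-server CPUs
observed_per_minute = (5_000, 8_000)  # low and high observed throughput, lines/min

per_cpu_per_minute = [x / CPUS for x in observed_per_minute]  # 2,500 and 4,000
per_cpu_per_second = [x / 60 for x in per_cpu_per_minute]     # ~41.7 and ~66.7

for m, s in zip(per_cpu_per_minute, per_cpu_per_second):
    print(f"{m:,.0f} lines/CPU/min = {s:.1f} lines/CPU/sec")
```

This gives roughly 41.7 and 66.7 lines per CPU per second, which the figure above rounds down to 40 and 65.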

One example could be a distribution-based company processing a large volume of sales every day. So what does this mean for such a company?

If sales orders are around 20 lines each, then:

A single CPU can process 2-3 sales orders per second. Max.
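A quick Python check of the sales-order figure, using the per-CPU range of 40 to 65 lines per second and about 20 lines per order, both taken from the text:

```python
LINES_PER_ORDER = 20              # typical sales order size in this example
per_cpu_lines_per_sec = (40, 65)  # per-CPU range from the measurement above

# Sales orders one CPU can push through per second, low and high estimate.
orders_per_sec = [x / LINES_PER_ORDER for x in per_cpu_lines_per_sec]
print(orders_per_sec)  # [2.0, 3.25]
```

That is 2 to 3.25 orders per second, which the text rounds to 2-3.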

Let's now think about it from the users' perspective: how many users can work in the system?

The answer is: this really DOES NOT MATTER :) The only thing that matters is how many transactions these users perform.

But anyway, going back to my example, here is the number of NAMED USERS who worked with Acumatica over the life of the company. We do not license by them, but I was just interested in how many users were creating such a traffic jam :)

Well, we can see that at peaks there were 40-45 physical people in the office :) using Acumatica. Very good. We can clearly see the weekends :). Notice the implementation stage :), then go-live and the period right after go-live, as well as the steady operations later. Nice... It looks like a comb...

Another metric we can use.

Number of operations, that is, logging in or opening a screen or report. I am not talking about operations within a screen, which can be treated as transactions, but, well, errr, mmmm. :)

Operations like JUST OPENING A SCREEN, A REPORT, HELP, etc.

What I see here is how difficult the 2012 year-end closing process was :) And when the team went on holiday after it ;) Look at it in detail to see how difficult it was. Below are the operations per day.

Some days had 6,000 screen openings and logins PER DAY, by just 30 people. That is 200 screens opened by a SINGLE person per day on average. Think about it...
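The per-person figure is simple division. The Python sketch below also assumes an 8-hour working day (my assumption, not something taken from the data) to show what 200 openings per person means in practice:

```python
ops_per_day = 6_000       # logins + screen/report openings on a busy day (from the chart)
people = 30               # people working that day
WORKDAY_MINUTES = 8 * 60  # assumption: an 8-hour working day

ops_per_person = ops_per_day / people                   # 200 per person per day
minutes_between_ops = WORKDAY_MINUTES / ops_per_person  # one new operation every ~2.4 minutes

print(f"{ops_per_person:.0f} operations per person per day")
print(f"one operation every {minutes_between_ops:.1f} minutes")
```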

The conclusion is:

The number of users DOES NOT MATTER AT ALL; the only thing that matters is the NUMBER OF TRANSACTIONS at its PEAK. PER SECOND.

A single CPU can process 40 to 65 TRANSACTION LINES per second.

A single CPU can process 2-3 sales orders (SO) of 20 lines each per second.

For a distribution-based business, translated to editions, this gives:

Departmental: 4-6 SO per second,

Divisional: 8-12 SO per second,

Enterprise: 16-24 SO per second.
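These edition figures follow from multiplying the per-CPU rate of 2-3 SO per second by the number of CPUs. In the Python sketch below, only the 2 CPUs for Departmental is stated in this post; the 4 and 8 CPUs for Divisional and Enterprise are inferred from the ranges above, so treat them as assumptions:

```python
SO_PER_CPU_PER_SEC = (2, 3)  # 20-line sales orders per CPU per second (from the measurement)

# Assumed CPU counts per edition: Departmental = 2 matches the test server,
# Divisional and Enterprise are inferred from the ranges above.
EDITION_CPUS = {"Departmental": 2, "Divisional": 4, "Enterprise": 8}

def edition_capacity(cpus: int) -> tuple[int, int]:
    """Return the (low, high) sales orders per second for a given CPU count."""
    low, high = SO_PER_CPU_PER_SEC
    return cpus * low, cpus * high

for edition, cpus in EDITION_CPUS.items():
    low, high = edition_capacity(cpus)
    print(f"{edition}: {low}-{high} SO per second")
```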

For other industries, just take your peak load, calculate the number of transaction lines per second, and compare it with the figures above.
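The same calculation can be turned around into a rough sizing rule: take the peak in transaction lines per minute (the resolution of the charts above), convert it to lines per second, and divide by the per-CPU range. A minimal Python sketch; `cpus_needed` is a hypothetical helper, the 12,000 lines/minute peak is a made-up example, and it assumes dedicated cores with no extra CPU-hungry reports or BI:

```python
import math

PER_CPU_LINES_PER_SEC = (40, 65)  # measured range for one dedicated CPU core

def cpus_needed(peak_lines_per_minute: float) -> tuple[int, int]:
    """Return (optimistic, conservative) CPU counts for a given peak load.

    Optimistic divides by the high end of the per-CPU range,
    conservative by the low end; both are rounded up to whole CPUs.
    """
    peak_per_sec = peak_lines_per_minute / 60
    low_rate, high_rate = PER_CPU_LINES_PER_SEC
    return math.ceil(peak_per_sec / high_rate), math.ceil(peak_per_sec / low_rate)

# Hypothetical peak: 12,000 transaction lines in the busiest minute (200 per second).
print(cpus_needed(12_000))  # (4, 5)
```

Rounding up to whole CPUs is deliberate here: a peak that needs 3.1 CPUs' worth of processing will still queue on 3.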

Well, all this assumes that you will not design other CPU-hungry logic in reports or BI.

As more data from our customers becomes available for analysis, I will be able to estimate that impact as well.

Cheers,

Sergey.